written by Dr. Jirawat Tangpanitanon, CQT & QTFT

reviewed by Supharat Ridthichai, Venture Lead, Smart Electricity, PTT Innovation Lab (ExpresSo)

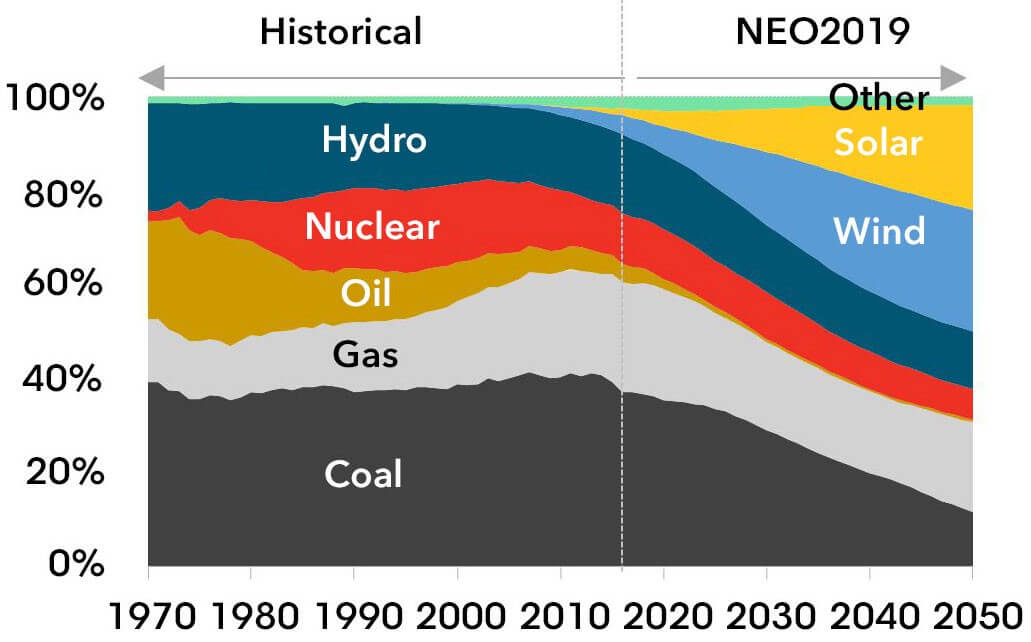

The world’s energy consumption is growing by about 2.3% per year, according to the Energy Information Administration, and is expected to exceed 700 quadrillion Btu a year by 2040. Although fossil fuels have been the primary source of the world’s energy, alternative renewable energy resources are urgently needed because of environmental concerns and the finite supply of fossil fuels. The most widely used renewable energy resources to date are hydro, wind and photovoltaic.

According to Bloomberg New Energy Finance, wind and solar are predicted to supply 50% of the world’s energy consumption by 2050. Approximately 10 trillion US dollars of new investment is expected to go to renewable energy between now and 2050.

Energy system optimization

The rising demand for renewable energy calls for highly optimized energy management systems. Although renewable energy resources are ‘free’, their output is hard to predict because of factors such as fluctuations in solar irradiation, weather and wind speed.

Recently, hybrid power systems that combine solar, wind and hydro have been deployed to improve reliability. Their scale can range from a small unit supplying power for a single home to a large unit that can power a village or an island.

Optimization methods are then used to find the cheapest combination of all power generators (both renewable and conventional) and the storage capacity that can support the expected demand with the minimum acceptable level of security. The models must also include meteorological data, solar, hydro, wind and battery systems.
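To make the sizing problem concrete, here is a minimal sketch that searches a small grid of designs by brute force. All demand, generation and cost figures are hypothetical, the day is split into six 4-hour blocks, and the battery model (half-full at the start, no losses) is an assumption made only for illustration. Real planning tools use far richer models and solvers.

```python
from itertools import product

# Hypothetical data for one day split into six 4-hour blocks (kWh per block).
DEMAND = [12, 16, 28, 24, 36, 20]
SOLAR_PER_PANEL = [0.0, 0.4, 1.2, 1.0, 0.1, 0.0]
WIND_PER_TURBINE = [9.0, 8.0, 6.0, 7.0, 8.0, 10.0]

# Hypothetical capital costs (arbitrary currency units).
COST_PANEL = 300
COST_TURBINE = 9000
COST_BATTERY_PER_KWH = 400

# "Minimum acceptable level of security": at most 2% of demand may go unserved.
MAX_UNMET_FRACTION = 0.02


def unmet_fraction(n_panels, n_turbines, battery_kwh):
    """Simulate the day and return the fraction of demand that is not served."""
    charge = battery_kwh / 2  # assumption: battery starts half full
    unmet = 0.0
    for demand, solar, wind in zip(DEMAND, SOLAR_PER_PANEL, WIND_PER_TURBINE):
        surplus = n_panels * solar + n_turbines * wind - demand
        if surplus >= 0:
            # Store what fits in the battery; the rest is curtailed.
            charge = min(battery_kwh, charge + surplus)
        else:
            # Cover the shortfall from the battery as far as possible.
            draw = min(charge, -surplus)
            charge -= draw
            unmet += -surplus - draw
    return unmet / sum(DEMAND)


def cheapest_design(panel_options, turbine_options, battery_options):
    """Return (cost, (panels, turbines, battery_kwh)) of the cheapest feasible design."""
    best = None
    for design in product(panel_options, turbine_options, battery_options):
        if unmet_fraction(*design) > MAX_UNMET_FRACTION:
            continue  # violates the reliability requirement
        n_panels, n_turbines, battery_kwh = design
        cost = (n_panels * COST_PANEL + n_turbines * COST_TURBINE
                + battery_kwh * COST_BATTERY_PER_KWH)
        if best is None or cost < best[0]:
            best = (cost, design)
    return best


best = cheapest_design(range(0, 41, 5), range(0, 6), range(0, 41, 10))
print(best)
```

Even this toy search evaluates every combination on the grid, so the number of simulations multiplies with each additional design variable.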

Hybrid energy production systems

Renewable energy also naturally leads to distributed energy resources (DERs) such as rooftop solar PV units. DERs may also include non-renewable generation, battery storage, electric vehicles, and other home energy management technologies that use the Internet of Things in smart homes. Optimization methods are needed to support the operation of the network, or “smart grid”, i.e. to find the best balance between reliability, availability, efficiency and cost. Grid optimization spans power generation, transmission and distribution, all the way to demand management.

Doing such optimization on a large scale is computationally expensive. For example, merely finding the optimal number of power generation units for a given demand requires computational time that grows exponentially with the number of variables in the model.

This means that, to find the best smart grid operation, the required computational resources roughly double every time a new node is added to the network. In practice, one usually has to settle for solutions that are not globally optimal.
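The doubling can be seen in a minimal sketch of a heavily simplified, single-period version of the problem: decide which generating units to switch on so that demand is covered at the lowest running cost. The unit capacities, costs and demand below are hypothetical, and an exhaustive search is used only to expose the size of the search space.

```python
from itertools import product


def cheapest_commitment(units, demand):
    """Exhaustively search all on/off patterns.

    units  -- list of (capacity_mw, running_cost) pairs (hypothetical values)
    demand -- load in MW that the committed units must cover
    Returns ((cost, on_off_pattern) or None, number_of_patterns_checked).
    """
    best = None
    checked = 0
    for on_off in product((0, 1), repeat=len(units)):
        checked += 1
        capacity = sum(cap for (cap, _), on in zip(units, on_off) if on)
        if capacity < demand:
            continue  # this pattern cannot meet the demand
        cost = sum(c for (_, c), on in zip(units, on_off) if on)
        if best is None or cost < best[0]:
            best = (cost, on_off)
    return best, checked


units = [(100, 50), (80, 38), (50, 20), (30, 15)]
best, checked = cheapest_commitment(units, demand=150)
print(best, checked)  # 4 units -> 2**4 = 16 patterns checked

# Adding a fifth unit doubles the number of patterns to 32.
_, checked_with_extra_unit = cheapest_commitment(units + [(60, 30)], demand=150)
print(checked_with_extra_unit)
```

Practical solvers prune most of these patterns, but the worst-case growth remains exponential in the number of on/off decisions.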

The challenge of large-scale optimization is also faced in other areas of the energy industry. For example, optimizing a shale-gas supply chain network covering an area of around 10,000 square kilometres may involve more than 50,000 variables and 50,000 constraints. A state-of-the-art supercomputer may take more than 15 hours, or even days in some cases, to find a desirable solution. This high computational cost limits the efficiency of energy system optimization at national and global scales.

Quantum computing

Quantum computing provides a new paradigm to solve complex optimization problems. A quantum computer processes information differently than a conventional or ‘classical’ computer in two fundamental aspects.

First, unlike a classical bit, which can be either 0 or 1, the basic unit of a quantum computer, known as a qubit, can be in a superposition of 0 and 1 at the same time. Superposition allows a quantum algorithm to act on many candidate solutions at once, and a well-designed algorithm makes a good solution the most likely outcome when the qubits are measured.

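Second, two or more qubits can share correlations that have no classical counterpart, so that measuring one qubit is immediately reflected in the outcomes of the others, even when they are far apart and no signal is sent between them. This phenomenon is known as quantum entanglement, and it is an essential ingredient for quantum speedup.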
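Both ideas can be illustrated with a minimal classical simulation of two qubits. The sketch below stores the four amplitudes of the states 00, 01, 10 and 11 in a plain list and applies two standard gates, a Hadamard and a CNOT; the function names are chosen here for illustration only. Simulating qubits this way shows the mathematics but, of course, offers no speedup.

```python
import math


def hadamard_on_first(state):
    """Put the first qubit into an equal superposition of 0 and 1."""
    a00, a01, a10, a11 = state
    s = 1 / math.sqrt(2)
    return [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]


def cnot_first_controls_second(state):
    """Flip the second qubit whenever the first qubit is 1."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


def probabilities(state):
    """Probability of measuring 00, 01, 10 and 11."""
    return [abs(a) ** 2 for a in state]


state = [1.0, 0.0, 0.0, 0.0]               # both qubits start in 0
state = hadamard_on_first(state)           # superposition of 00 and 10
state = cnot_first_controls_second(state)  # entangled state of 00 and 11
print(probabilities(state))
```

After the two gates, only the outcomes 00 and 11 have non-zero probability, each about one half: neither qubit has a definite value on its own, yet the two measurement results always agree.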

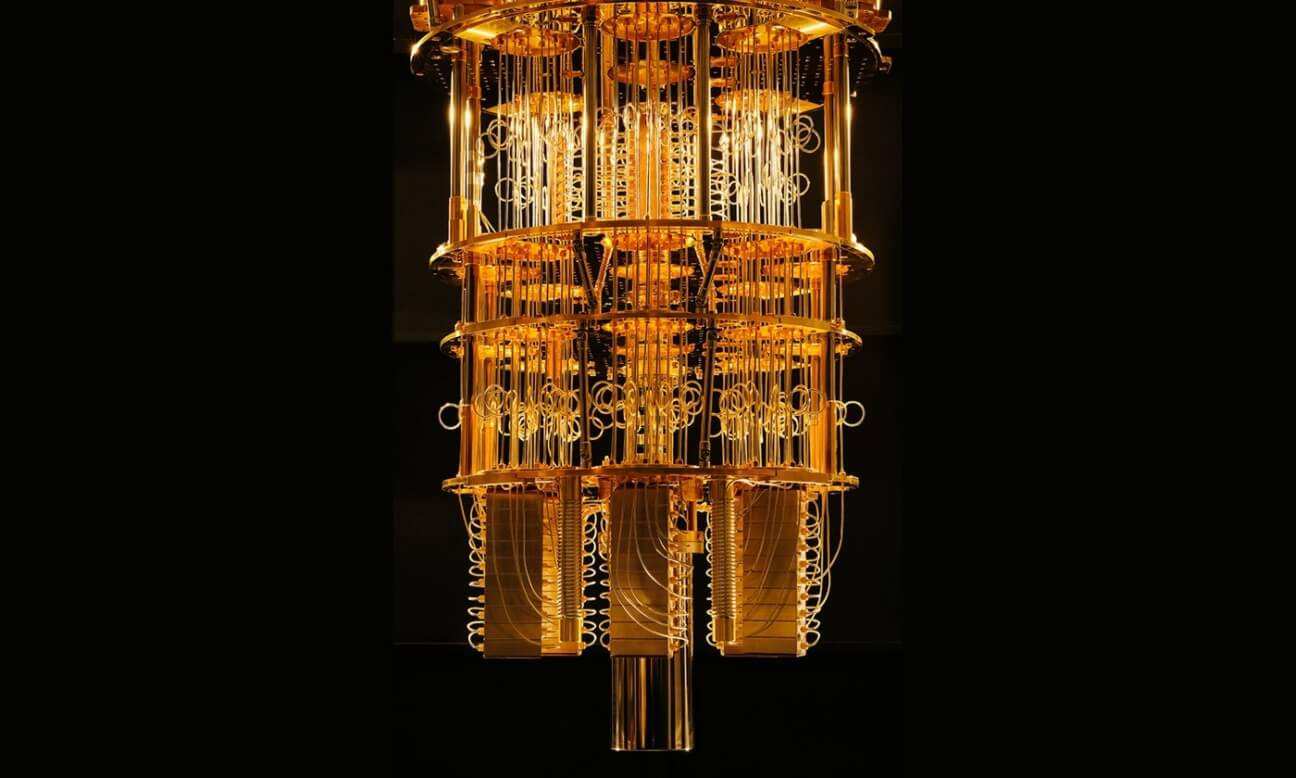

Building a full-scale quantum computer is, however, far from trivial. Quantum hardware is extremely sensitive to noise. Many quantum hardware architectures, such as superconducting processors, require the qubits to be cooled to millikelvin temperatures, much colder than outer space.

A dilution refrigerator used to cool superconducting quantum processors down to millikelvin temperatures.

Although the goal is still distant, tremendous progress has been made over the past two decades, including Nobel Prize-winning experiments. In the past few years, governments worldwide and tech giants such as Google, IBM, Microsoft, Alibaba and many others have been investing heavily in quantum computing. Examples include the European Union’s 1-billion-euro Quantum Technologies Flagship, China’s 10-billion-USD quantum research center, and the 1.2-billion-USD quantum computing bill in the US.

Quantum computing for energy system optimization

The promise of quantum computing for energy system optimization has also attracted a lot of attention from the energy sector. For example, in January 2019, the oil and gas giant ExxonMobil signed an agreement with IBM to develop next-generation energy and manufacturing technologies using quantum computing.

In April 2019, the U.S. Department of Energy announced a plan to provide US$40 million for developing new algorithms and software for quantum computers. The same department also announced US$37 million this month for materials and chemistry research in quantum information science.

Recently, two scientists from Cornell University conducted a systematic study of quantum computing for energy system optimization problems. Their results were published in Energy in July 2019.

As a proof of concept, the authors employ IBM’s and D-Wave’s cloud quantum computing platforms to solve simplified problems in various areas of the energy industry, including facility-location allocation for energy systems infrastructure development, unit commitment in electric power systems operations, and heat exchanger network synthesis.

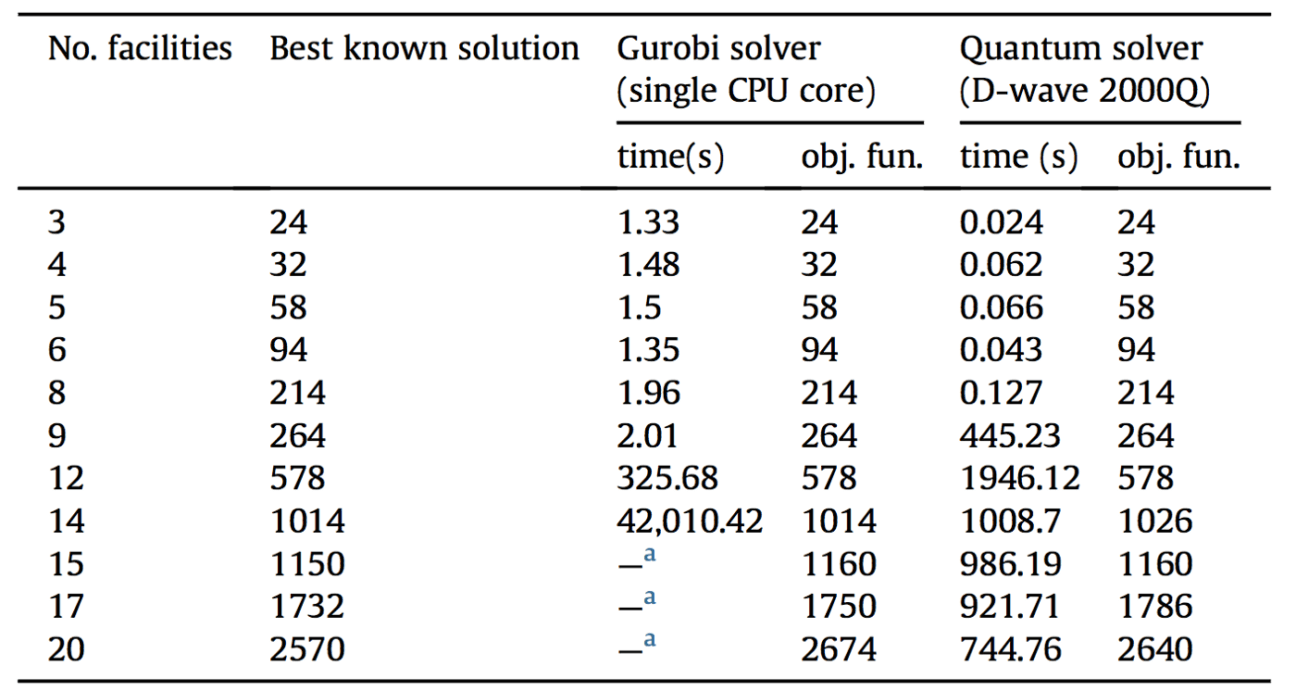

D-Wave’s quantum processors

For the facility-location allocation problem, the goal is to find the optimal locations of facilities such as solar or wind power farms that minimize facility opening and transportation costs for a given energy demand and resource availability.

This problem is mapped to what is known as a quadratic assignment problem, which is difficult to optimize with a classical computer. The table below shows the runtimes of a single CPU core and D-Wave’s quantum processor for different numbers of facilities. The computational time of the CPU core grows exponentially with the number of facilities, whereas that of the quantum processor does not. For 14 facilities, the single CPU core takes more than 11 hours to run, while the D-Wave processor takes only 16 minutes.

A. Ajagekar, F. You, Energy 179 (2019) 76–89
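To give a feel for why the quadratic assignment problem is hard, here is a minimal brute-force sketch in its textbook form: assign each facility to a distinct location so that the sum of flow times distance over all facility pairs is smallest. The flow and distance matrices are hypothetical, and this is not the formulation or solver used in the paper.

```python
from itertools import permutations
from math import factorial

# Hypothetical symmetric data for 3 facilities and 3 candidate locations.
FLOW = [[0, 5, 2],
        [5, 0, 3],
        [2, 3, 0]]      # FLOW[i][j]: traffic between facilities i and j
DISTANCE = [[0, 1, 4],
            [1, 0, 2],
            [4, 2, 0]]  # DISTANCE[a][b]: distance between locations a and b


def assignment_cost(location_of):
    """Total flow-weighted distance when facility i sits at location_of[i]."""
    n = len(location_of)
    return sum(FLOW[i][j] * DISTANCE[location_of[i]][location_of[j]]
               for i in range(n) for j in range(n))


def solve_qap_brute_force():
    """Try every assignment of facilities to locations and keep the cheapest."""
    n = len(FLOW)
    return min((assignment_cost(p), p) for p in permutations(range(n)))


best_cost, best_assignment = solve_qap_brute_force()
print(best_cost, best_assignment)

# Exhaustive search must consider n! assignments; for 14 facilities:
candidates_for_14 = factorial(14)
print(candidates_for_14)  # 87178291200
```

With 14 facilities there are already more than 87 billion possible assignments, which is why classical solvers rely on pruning and heuristics rather than plain enumeration.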

This speedup provides promising preliminary evidence of the potential of quantum computing for the energy industry. However, for the other applications, the authors find that the D-Wave quantum processor does not provide a quantum advantage because of the noise and limited connectivity of current quantum hardware.

As mentioned above, quantum computers are still at a very early stage, perhaps analogous to the vacuum-tube era of today’s computers in the 1950s. However, the field is evolving fast and the promise is high. Industries and professionals should, therefore, keep track of relevant developments in order to make strategic choices.